If you spend time around young children, sooner or later you’ll end up playing the game Chutes and Ladders. This game actually has quite an interesting history, dating back to ancient India. The Milton Bradley version dates from the 1940s.

The game’s history is probably the most interesting thing about it, because the game is utterly devoid of strategy or technique. The players’ only interaction with the game is to spin a spinner that provides a random number from 1 through 6; there is no skill involved and there are no decisions to be made. And while it is perfectly enjoyable as a family pastime that brings people together, in and of itself the game offers little intellectual distraction.

That intellectual idle time led me to think about the nature of the board. I wondered if the game, being entirely stochastic, had any hidden patterns within. After all, it’s reasonable to assume that Milton Bradley didn’t have access to any sort of automated analysis of their game back in 1943. I wondered things like:

- What’s the average number of spins to win?

- How many chutes and ladders will a player typically hit?

- Are the chutes and ladders balanced?

- Are some squares hit more often than others?

What’s neat is that the nature of the game lends itself well to numerical analysis. There’s no human component that requires estimation or approximation. Literally the only action a player takes is to spin a spinner. Why not use Monte Carlo analysis to play bulk trials of the game and look for answers?

Coding this in Python was fairly straightforward. Although Python is not the most performant language, its excellent visualization tools made it the language of choice for this project. Using joblib to parallelize the task meant that I could simulate 500,000 single-player games in about 7 seconds. (Why single-player? The game doesn’t change at all with the number of players. Players never block or otherwise affect one another, so from a numerical analysis perspective there are no exclusions, conflicts, or alterations of any kind.)
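To give a feel for how little code one simulated game needs, here is a minimal sketch. It is not the code from the repository: the chute and ladder positions are an assumed layout (check them against your own board), the name `play_game` is made up for illustration, and the rule that an overshoot near the end leaves the player in place is inferred from the "exact spin" behavior described later in this post. Parallelization with joblib is left out to keep it short.

```python
import random

# Assumed board layout (hypothetical; editions of the board differ slightly).
LADDERS = {1: 38, 4: 14, 9: 31, 21: 42, 28: 84, 36: 44, 51: 67, 71: 91, 80: 100}
CHUTES = {16: 6, 48: 26, 49: 11, 56: 53, 62: 19, 64: 60, 87: 24, 93: 73, 95: 75, 98: 78}
FINAL_SQUARE = 100


def play_game(rng):
    """Play one single-player game; return (spins, ladders_hit, chutes_hit)."""
    position = 0  # the player starts off the board
    spins = ladders_hit = chutes_hit = 0
    while position != FINAL_SQUARE:
        spins += 1
        target = position + rng.randint(1, 6)  # spinner: 1 through 6 inclusive
        if target > FINAL_SQUARE:
            continue  # overshoot: stay put and wait for an exact spin
        if target in LADDERS:
            ladders_hit += 1
            target = LADDERS[target]
        elif target in CHUTES:
            chutes_hit += 1
            target = CHUTES[target]
        position = target
    return spins, ladders_hit, chutes_hit


# A seeded generator makes a run repeatable.
rng = random.Random(2024)
spins, ladders_hit, chutes_hit = play_game(rng)
print(f"won in {spins} spins ({ladders_hit} ladders, {chutes_hit} chutes)")
```

Passing the random generator in as an argument keeps each game independent of global state, which is also what makes it easy to hand batches of games to separate worker processes.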

The results are kind of interesting if you’re the sort of person who wonders about these sorts of things.

For half a million games, I saw this:

7.633320899999944 seconds elapsed time

Minimum number of spins to win: 7

Maximum number of spins to win: 446

Arithmetic mean number of ladders hit by player: 3

Arithmetic mean number of chutes hit by player: 4

Arithmetic mean of spins to win: 40.092362

Median of spins to win: 33.0

Standard deviation = 25.522641855046196
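Summary figures like these can be produced with the standard library alone. The sketch below is an illustration under assumptions rather than the repository code: the board layout in `JUMPS` is assumed, the function names are hypothetical, and it uses the population standard deviation, which may or may not be the variant used for the number above.

```python
import random
import statistics

# Assumed chute and ladder positions (hypothetical layout), start -> end.
JUMPS = {
    1: 38, 4: 14, 9: 31, 21: 42, 28: 84, 36: 44, 51: 67, 71: 91, 80: 100,
    16: 6, 48: 26, 49: 11, 56: 53, 62: 19, 64: 60, 87: 24, 93: 73, 95: 75, 98: 78,
}


def spins_to_win(rng):
    """Return the number of spins one simulated game takes."""
    position, spins = 0, 0
    while position != 100:
        spins += 1
        target = position + rng.randint(1, 6)
        if target <= 100:  # an overshoot leaves the player where they are
            position = JUMPS.get(target, target)
    return spins


def summarize(n_games, seed=0):
    """Simulate n_games and return basic statistics on spins to win."""
    rng = random.Random(seed)
    counts = [spins_to_win(rng) for _ in range(n_games)]
    return {
        "min": min(counts),
        "max": max(counts),
        "mean": statistics.fmean(counts),
        "median": statistics.median(counts),
        "stdev": statistics.pstdev(counts),  # population standard deviation
    }


for name, value in summarize(5000, seed=1).items():
    print(f"{name}: {value}")
```

A run of a few thousand games is enough to see the gap between the mean and the median, which comes from the long tail of unlucky games.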

There’s more information to be found in the visualizations:

With an average of about 40 spins to win, the cumulative share of games won rises in a smooth curve as the number of spins increases. You can see, for example, that the probability of a game concluding within 50 to 60 spins is about 81-82%, and that most games are won in under 40 spins (the median number of spins to win being 33).
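A cumulative curve of this kind is just the fraction of games that finished in a given number of spins or fewer. As a rough sketch (the function name is hypothetical and the sample list is made up for illustration, not simulation output):

```python
from bisect import bisect_right


def fraction_won_within(spin_counts, n_spins):
    """Return the share of games that finished in n_spins spins or fewer."""
    ordered = sorted(spin_counts)
    # bisect_right counts how many entries are <= n_spins
    return bisect_right(ordered, n_spins) / len(ordered)


sample = [7, 12, 20, 33, 33, 41, 58, 90]  # illustrative values only
print(fraction_won_within(sample, 33))  # 5 of 8 games -> 0.625
```

Evaluating this for every spin count from the minimum to the maximum gives the points of the cumulative curve.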

The heatmaps of board positions are particularly interesting to me. There are two heatmaps of note:

- a heatmap of where the player lands initially after spinning (i.e. before following any chute/ladder)

- a heatmap of where the player ends up at the conclusion of their turn (i.e. after following any chute/ladder)

The former shows two notable darker/cooler bands across the board: along the bottom, and in the upper third. These cooler bands are areas of infrequent landing. Once chutes/ladders are taken into account in the second heatmap, however, the picture becomes much noisier. The squares with ‘0’ values may seem counter-intuitive until you realize those are the heads of chutes or bottoms of ladders: no turn ever ‘ends’ on these squares. The second heatmap shows some particular hotspots such as the top of the “big ladder” and the bottom of Square 48’s yellow chute. Also note how it’s very, very common to get stuck at square 99, waiting for an exact spin of ‘1’ to finally win the game.
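The two tallies behind heatmaps like these can be sketched as follows. This is an illustration under assumptions, not the repository code: the `JUMPS` layout is assumed, the function names are hypothetical, an overshoot is counted as a turn ending on the square where it began (and as no landing at all), and `to_grid` assumes the usual serpentine numbering with square 1 at the bottom left.

```python
import random

# Assumed chute and ladder positions (hypothetical layout), start -> end.
JUMPS = {
    1: 38, 4: 14, 9: 31, 21: 42, 28: 84, 36: 44, 51: 67, 71: 91, 80: 100,
    16: 6, 48: 26, 49: 11, 56: 53, 62: 19, 64: 60, 87: 24, 93: 73, 95: 75, 98: 78,
}


def tally_squares(n_games, seed=0):
    """Count initial landings and end-of-turn positions per square."""
    rng = random.Random(seed)
    landed = [0] * 101  # index = square number; index 0 is unused
    ended = [0] * 101
    for _ in range(n_games):
        position = 0
        while position != 100:
            target = position + rng.randint(1, 6)
            if target > 100:
                ended[position] += 1  # overshoot: the turn ends where it began
                continue
            landed[target] += 1  # before following any chute/ladder
            position = JUMPS.get(target, target)
            ended[position] += 1  # after following any chute/ladder
    return landed, ended


def to_grid(counts):
    """Arrange per-square counts as 10 board rows, top row first."""
    rows = []
    for r in range(10):
        row = counts[10 * r + 1 : 10 * r + 11]
        rows.append(row if r % 2 == 0 else row[::-1])  # serpentine numbering
    return rows[::-1]


landed, ended = tally_squares(2000, seed=3)
for row in to_grid(ended):
    print(" ".join(f"{count:5d}" for count in row))
```

In the `ended` tally, every square that starts a chute or ladder stays at zero by construction, which is the pattern described above. The grids can be passed to any plotting library to draw the actual heatmaps.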

If you care to try this out locally, the source code is on GitHub.

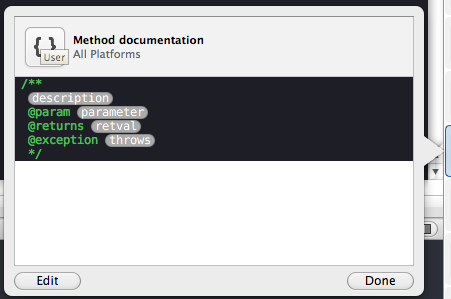
